@article{Gerstenecker2026Fail2Drive,
  author={Gerstenecker, Simon and Geiger, Andreas and Renz, Katrin},
  title={Fail2Drive: Benchmarking Closed-Loop Driving Generalization},
  journal={arXiv preprint arXiv:2604.08535},
  year={2026}
}

Katrin Renz

Hi! I am building a startup in physical AI. Previously, I completed my PhD in the Autonomous Vision Group (AVG) as part of the International Max Planck Research School for Intelligent Systems (IMPRS-IS), advised by Andreas Geiger. During my PhD, I worked on end-to-end autonomous driving, with a focus on combining vision, action, and language.

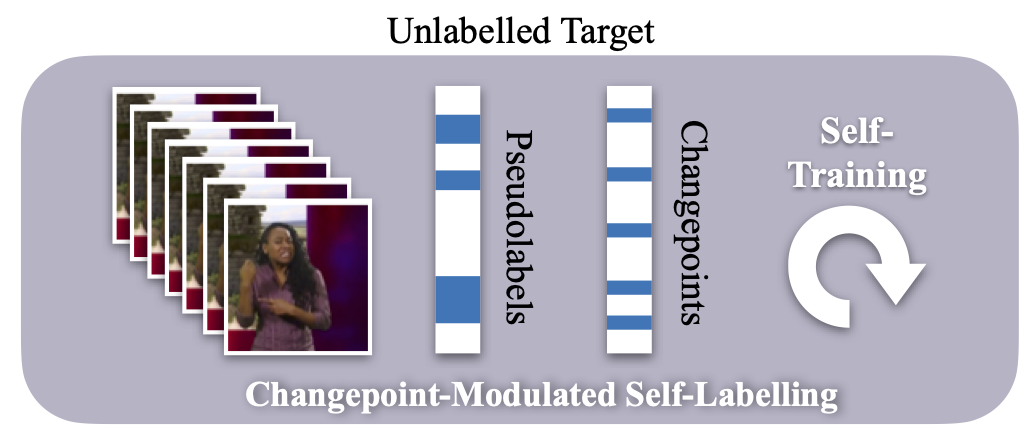

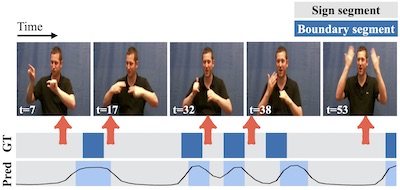

I completed my master's degree at Heilbronn University, where I worked with Nicolaj Stache. For my master's thesis, I spent time at VGG working on sign language segmentation with Gül Varol and Samuel Albanie.